Hello and welcome to Eye on AI. In this edition…the U.S. Census Bureau finds AI adoption declining…Anthropic reaches a landmark copyright settlement, but the judge isn’t happy…OpenAI is burning piles of cash, building its own chips, producing a Hollywood movie, and scrambling to save its corporate restructuring plans…OpenAI researchers find ways to tame hallucinations…and why teachers are failing the AI test.

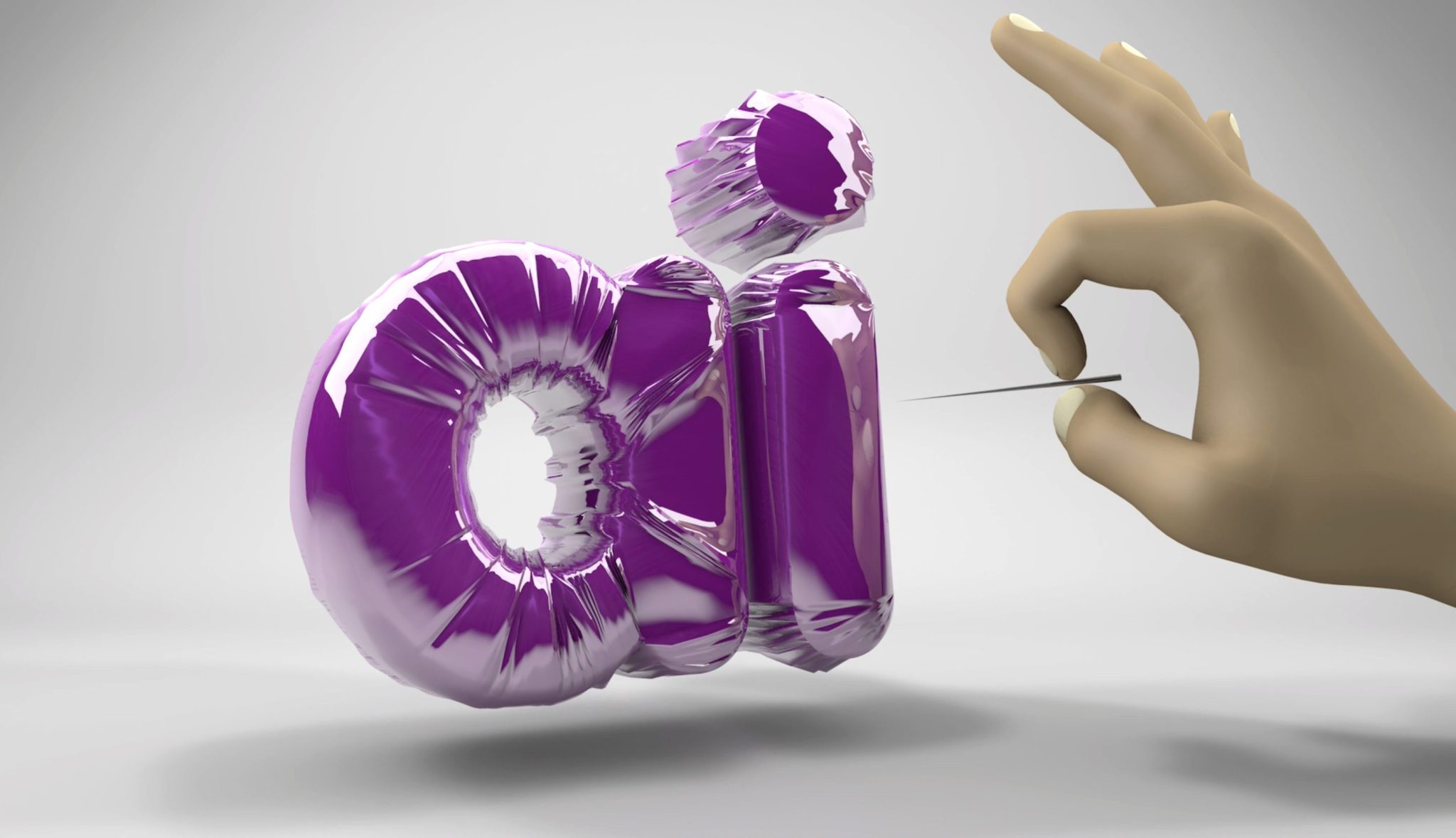

Concerns that we are in an AI bubble, at least as far as the valuations of AI companies (especially public companies) are concerned, are now at a fever pitch. Exactly what might cause the bubble to pop is unclear. But one thing that could cause it to deflate, perhaps explosively, would be clear evidence that the big corporations that hyperscalers such as Microsoft, Google, and AWS are counting on to spend huge sums deploying AI at scale are pulling back on AI investment.

So far, we’ve not yet seen that evidence in the hyperscalers’ financials, or in their forward guidance. But there are certainly mounting data points that have investors worried. That’s why that MIT survey that found that 95% of AI pilot projects fail to deliver a return on investment got so much attention. (Even though, as I have written here, the markets chose to focus only on the somewhat misleading headline and not look too carefully at what the research actually said. Then again, as I’ve argued, the market’s inclination to view news negatively that it might have shrugged off or even interpreted positively just a few months back is perhaps one of the surest signs that we may be close to the bubble popping.)

This week brought another worrying data point that probably deserves more attention. The U.S. Census Bureau conducts a biweekly survey of 1.2 million businesses. One of the questions it asks is whether, in the last two weeks, the company has used AI, machine learning, natural language processing, virtual agents, or voice recognition to produce goods or services. Since November 2023—which is as far back as the current data set seems to go—the number of firms answering “yes” has been trending steadily upwards, especially if you look at the six-week rolling average, which smooths out some spikes. But for the first time, in the past two months, the six-week rolling average for larger companies (those with more than 250 employees) has shown a very distinct dip, dropping from a high of 13.5% to more like 12%. A similar dip is evident for smaller companies too. Only microbusinesses, with fewer than four employees, continue to show a steady upward adoption trend.
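For readers unfamiliar with the smoothing involved: assuming one reading per two-week survey period, a six-week rolling average amounts to averaging each reading with the two before it. The sketch below shows the mechanics only. The function name is illustrative, and the sample readings are made-up placeholders, not Census Bureau data.

```python
from typing import List


def rolling_average(readings: List[float], window: int = 3) -> List[float]:
    """Average each reading with the `window - 1` readings before it.

    With one reading every two weeks, window=3 spans six weeks.
    The first `window - 1` readings have no full window and are skipped.
    """
    if window < 1:
        raise ValueError("window must be at least 1")
    return [
        sum(readings[i - window + 1 : i + 1]) / window
        for i in range(window - 1, len(readings))
    ]


# Hypothetical biweekly adoption rates (percent), invented for illustration.
sample = [9.0, 12.0, 9.0, 12.0, 9.0]
smoothed = rolling_average(sample)
print(smoothed)
```

In this toy series the raw readings swing by three points from one survey to the next, while the smoothed values move by only one, which is why a sustained dip in the rolling average is harder to dismiss as noise.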

A blip or a bursting?

This might be a blip. The Census Bureau also asks another question about AI adoption, querying businesses on whether they anticipate using AI to produce goods or services in the next six months. And here, the data don’t show a dip, although the percentage answering “yes” seems to have plateaued at a level below what it was back in late 2023 and early 2024.

Torsten Sløk, the chief economist at the investment firm Apollo who pointed out the Census Bureau data on his company’s blog, suggests that the Census Bureau results are probably a bad sign for companies whose lofty valuations depend on ubiquitous and deep AI adoption across the entire economy.

Another piece of analysis worth looking at: Harrison Kupperman, the founder and chief investment officer at Praetorian Capital, after making what he called a “back-of-the-envelope” calculation, concluded that the hyperscalers and leading AI companies like OpenAI are planning so much investment in AI data centers this year alone that they will need to earn $40 billion per year in additional revenues over the next decade just to cover the depreciation costs. And the bad news is that total current annual revenues attributable to AI are, he estimates, just $15 billion to $20 billion. I think Kupperman may be a bit low on that revenue estimate, but even if revenues were double the top of his range (which they aren’t), that would only just be enough to cover the depreciation cost. That certainly seems pretty bubbly.
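The arithmetic behind that comparison can be checked in a few lines. The sketch below uses only the figures quoted above ($40 billion in annual depreciation and $15 billion to $20 billion in estimated current AI revenue). The variable and function names are illustrative, and treating every dollar of revenue as available to cover depreciation is a simplifying assumption.

```python
# Figures quoted above, in billions of U.S. dollars per year.
ANNUAL_DEPRECIATION = 40.0
REVENUE_ESTIMATE_LOW = 15.0
REVENUE_ESTIMATE_HIGH = 20.0


def coverage_ratio(revenue: float, depreciation: float = ANNUAL_DEPRECIATION) -> float:
    """Return the share of annual depreciation that a revenue figure would cover.

    Simplifying assumption: every dollar of revenue goes toward depreciation,
    ignoring all other costs.
    """
    return revenue / depreciation


# Current estimates, then the hypothetical "double" cases.
scenarios = {
    "low estimate": REVENUE_ESTIMATE_LOW,
    "high estimate": REVENUE_ESTIMATE_HIGH,
    "double the low estimate": 2 * REVENUE_ESTIMATE_LOW,
    "double the high estimate": 2 * REVENUE_ESTIMATE_HIGH,
}

for label, revenue in scenarios.items():
    ratio = coverage_ratio(revenue)
    print(f"{label}: ${revenue:.0f}B covers {ratio:.1%} of depreciation")
```

Only the most generous case, double the high estimate, reaches full coverage, and even then nothing is left over for operating costs or profit.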

So, we may indeed be at the top of the Gartner hype cycle, poised to plummet down into “the trough of disillusionment.” Whether we see a gradual deflation of the AI bubble, or a detonation that results in an “AI Winter”—a period of sustained disenchantment with AI and a funding desert—remains to be seen. In a recent piece for Fortune, I looked at past AI winters—there have been at least three since the field began in the 1950s—and tried to draw some lessons about what precipitates them.

Is an AI winter coming?

As I argue in the piece, many of the factors that contributed to previous AI winters are present today. The past hype cycle that seems most similar to the current one took place in the 1980s around “expert systems,” though those were built using a very different kind of AI technology from today’s AI models. What’s most strikingly similar is that Fortune 500 companies were excited about expert systems and spent big money to adopt them, and some found huge productivity gains from using them. But ultimately many grew frustrated with how expensive and difficult it was to build and maintain this kind of AI, as well as how readily it could fail in real-world situations that humans could handle easily.

The situation is not that different today. Integrating LLMs into enterprise workflows is difficult and potentially expensive. AI models don’t come with instruction manuals, and integrating them into corporate workflows—or building entirely new ones around them—requires a ton of work. Some companies are figuring it out and seeing real value. But many are struggling.

And just like the expert systems, today’s AI models are often unreliable in real-world situations—although for different reasons. Expert systems tended to fail because they were too inflexible to deal with the messiness of the world. In many ways, today’s LLMs are far too flexible—inventing information or taking unexpected shortcuts. (OpenAI researchers just published a paper on how they think some of these problems can be solved—see the Eye on AI Research section below.)

Some are starting to suggest that the solution may lie in neurosymbolic systems, hybrids that try to integrate the best features of neural networks, like LLMs, with those of rules-based, symbolic AI, similar to the 1980s expert systems. It’s just one of several alternative approaches to AI that may start to gain traction if the hype around LLMs dissipates. In the long run, that might be a good thing. But in the near term, it might be a cold, cold winter for investors, founders, and researchers.

With that, here’s more AI news.

Jeremy Kahn

@jeremyakahn

Correction: Last week’s Tuesday edition of the newsletter misreported the year Corti was founded. It was 2016, not 2013. It also mischaracterized the relationship between Corti and Wolters Kluwer. The two companies are partners.

Before we get to the news, please check out Sharon Goldman’s fantastic feature on Anthropic’s “Frontier Red Team,” the elite group charged with pushing the AI company’s models into the danger zone—and warning the world about the risks it finds. Sharon details how this squad helps Anthropic’s business, too, burnishing its reputation as the AI lab that cares the most about AI safety and perhaps winning it a more receptive ear in the corridors of power.

FORTUNE ON AI

Companies are spending so much on AI that they’re cutting share buybacks, Goldman Sachs says—by Jim Edwards

PwC’s U.K. chief admits he’s cutting back entry-level jobs and taking a ‘watch and wait’ approach to see how AI changes work—by Preston Fore

As AI makes it harder to land a job, OpenAI is building a platform to help you get one—by Jessica Coacci

‘Godfather of AI’ says the technology will create massive unemployment and send profits soaring — ‘that is the capitalist system’—by Jason Ma

EYE ON AI NEWS

Anthropic reaches landmark $1.5 billion copyright settlement, but judge rejects it. The AI company announced a $1.5 billion deal to settle a class action copyright infringement lawsuit from book authors. The settlement would be one of the largest copyright case payouts in history and amounts to about $3,000 per book for nearly 500,000 works. The deal, struck after Anthropic faced potential damages so large they could have put it out of business, is seen as a benchmark for other copyright cases against AI firms, though legal experts caution it addresses only the narrow issue of using digital libraries of pirated books. However, U.S. District Court Judge William Alsup sharply criticized the proposed agreement as incomplete, saying he felt “misled” and warning that class lawyers may be pushing a deal “down the throat of authors.” He has delayed approving the settlement until lawyers provide more details. You can read more about the initial settlement from my colleague Beatrice Nolan here in Fortune and about the judge’s rejection of it here from Bloomberg Law.

Meanwhile, authors file copyright infringement lawsuit against Apple for AI training. Two authors, Grady Hendrix and Jennifer Roberson, have filed a lawsuit against Apple alleging the company used pirated copies of their books to train its OpenELM AI models without permission or compensation. The complaint claims Applebot accessed “shadow libraries” of copyrighted works. Apple was not immediately available to respond to the authors’ allegations. You can read more from Engadget here.

OpenAI says it will burn through $115 billion by 2029. That’s according to a story in The Information, which cited figures provided to the company’s investors. That cash burn is about $80 billion higher than previous forecasts from the company. Much of the jump in costs has to do with the enormous amounts OpenAI is spending on cloud computing to train its AI models, although it is also facing higher-than-previously-estimated costs for inference, or running AI models once trained. The only good news is that the company said it expected to be bringing in $200 billion in revenues by 2030, 15% more than previously forecast, and it is predicting 80% to 85% gross margins on its free ChatGPT products.

OpenAI scrambling to secure restructuring deal. The company is even considering the “nuclear option” of leaving California in order to pull off the corporate restructuring, according to The Wall Street Journal, although the company denies any plans to leave the state. At stake is about $19 billion in funding, nearly half of what OpenAI raised in the past year, which investors could withdraw if the restructuring is not completed by year’s end. The company is facing stiff opposition from dozens of California nonprofits, labor unions, and philanthropies, as well as investigations by the attorneys general of both California and Delaware.

OpenAI strikes $10 billion deal with Broadcom to build its own AI chips. The deal will see Broadcom build customized AI chips and server racks for the AI company, which is seeking to reduce its dependency on Nvidia GPUs and on the cloud infrastructure provided by its partner and investor Microsoft. The move could help OpenAI reduce costs (see item above about its colossal cash burn). CEO Sam Altman has also repeatedly warned that a global shortage of Nvidia GPUs was slowing progress, pushing OpenAI to pursue alternative hardware solutions alongside cloud deals with Oracle and Google. Broadcom confirmed the new customer during its earnings call, helping send its shares up nearly 11% as it projected the order would significantly boost revenue starting in 2026. Read more from The Wall Street Journal here.

OpenAI plans animated feature film to convince Hollywood to use its tech. The film, to be called Critterz, will be made largely with its AI tools including GPT-5, in a bid to prove generative AI can compete with big-budget Hollywood productions. The movie, created with partners Native Foreign and Vertigo Films, is being produced in just nine months on a budget under $30 million—far less than typical animated features—and is slated to debut at Cannes before a global 2026 release. The project aims to win over a film industry skeptical of generative AI, amid concerns about the technology’s legal, creative, and cultural implications. Read more from The Verge here.

ASML invests €1.3 billion in French AI company Mistral. The Dutch company, which makes equipment essential for the production of advanced computer chips, becomes Mistral’s largest shareholder as part of a €1.7 billion ($2 billion) funding round that values the two-year-old AI firm at nearly €12 billion. The partnership links Europe’s most valuable semiconductor equipment manufacturer with its leading AI start-up, as the region increasingly looks to reduce its reliance on U.S. technology. Mistral says the deal will help it move beyond generic AI functions, while ASML plans to apply Mistral’s expertise to enhance its chipmaking tools and offerings. More from the Financial Times here.

Anthropic endorses new California AI bill. Anthropic has become the first AI company to endorse California’s Senate Bill 53 (SB 53), a proposed AI law that would require frontier AI developers to publish safety frameworks, disclose catastrophic risk assessments, report incidents, and protect whistleblowers. The company says the legislation, shaped by lessons from last year’s failed SB 1047, strikes the right balance by mandating transparency without imposing rigid technical rules. While Anthropic maintains that federal oversight is preferable, it argues SB 53 creates a vital “trust but verify” standard to keep powerful AI development safe and accountable. Read Anthropic’s blog on the endorsement here.

EYE ON AI RESEARCH

OpenAI researchers say they’ve found a way to cut hallucinations. A team from OpenAI says it believes one reason AI models hallucinate so often is that during the phase of training in which they are refined through human feedback and evaluated on various benchmarks, they are penalized for declining to answer a question due to uncertainty. At the same time, the models are generally not rewarded for expressing doubt, omitting dubious details, or requesting clarification. In fact, most evaluation metrics either look only at overall accuracy, frequently on multiple-choice exams, or, even worse, provide a binary “thumbs up” or “thumbs down” on an answer. These kinds of metrics, the OpenAI researchers warn, reward overconfident “best guess” answers.

To correct this, the OpenAI researchers propose three fixes. First, they say a model should be given explicit confidence thresholds for its answers and told not to answer unless that threshold is crossed. Next, they recommend that model benchmarks incorporate confidence targets and that the evaluations deduct points for incorrect answers in their scoring—which means the models will be penalized for guessing. Finally, they suggest the models be trained to craft the most useful response that crosses the minimal confidence threshold—to avoid the model learning to err on the side of not answering in more circumstances than warranted.
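One way to picture the first two fixes is a toy grading rule. The sketch below is an illustration under stated assumptions, not the researchers’ exact scheme: the function names are hypothetical, a correct answer scores one point, abstaining scores zero, and the penalty for a wrong answer is set to t / (1 - t) so that answering has a positive expected score only when the model’s confidence exceeds the threshold t.

```python
from typing import Optional


def wrong_answer_penalty(threshold: float) -> float:
    """Penalty that makes guessing break even at exactly `threshold` confidence.

    Assumption for illustration: correct = +1, abstain = 0, wrong = -penalty.
    Solving p * 1 - (1 - p) * penalty = 0 at p = threshold gives t / (1 - t).
    """
    if not 0.0 <= threshold < 1.0:
        raise ValueError("threshold must be in [0, 1)")
    return threshold / (1.0 - threshold)


def score(is_correct: Optional[bool], threshold: float) -> float:
    """Score one response; `None` means the model declined to answer."""
    if is_correct is None:
        return 0.0
    return 1.0 if is_correct else -wrong_answer_penalty(threshold)


def expected_score_if_answering(confidence: float, threshold: float) -> float:
    """Expected score when the model answers with the given chance of being right."""
    return confidence - (1.0 - confidence) * wrong_answer_penalty(threshold)


def should_answer(confidence: float, threshold: float) -> bool:
    """Answer only when doing so beats the zero score earned by abstaining."""
    return expected_score_if_answering(confidence, threshold) > 0.0
```

With a threshold of 0.75, for instance, a wrong answer costs three points, so a model that is 90% sure should answer while one that is 50% sure should abstain. With a threshold of 0 the penalty vanishes and the rule collapses to plain accuracy scoring, under which guessing never hurts.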

It’s not clear that these strategies would eliminate hallucinations completely. The models still have no inherent understanding of the difference between truth and fiction, no sense of which sources are more trustworthy than others, and no grounding of their knowledge in real-world experience. But these techniques might go a long way towards reducing fabrications and inaccuracies. You can read the OpenAI paper here.

AI CALENDAR

Sept. 8-10: Fortune Brainstorm Tech, Park City, Utah.

Oct. 6-10: World AI Week, Amsterdam.

Oct. 21-22: TedAI San Francisco.

Dec. 2-7: NeurIPS, San Diego.

Dec. 8-9: Fortune Brainstorm AI San Francisco. Apply to attend here.

BRAIN FOOD

Why hasn’t teaching adapted? If businesses are still struggling to find the killer use cases for generative AI, kids have no such angst. They know the killer use case: cheating on your homework. It’s depressing but not surprising to read an essay in The Atlantic from a current high school student, Ashanty Rosario, who describes how her classmates are using ChatGPT to avoid having to do the hard work of analyzing literature or puzzling out how to solve math problem sets. You hear stories like this all the time now. And if you talk to anyone who teaches high school or, particularly, university students, it’s hard not to conclude that AI is the death of education.

But what I do find surprising—and perhaps even more depressing—is why, almost three years after the debut of ChatGPT, more educators haven’t fundamentally changed the way they teach and assess students. Rosario nails it in her essay. As she says, teachers could start assessing students in ways that are far more difficult to game with AI, such as giving oral exams or relying far more on the arguments students make during in-class discussion and debate. They could rely more on in-class presentations or “portfolio-based” assessments, rather than on research reports produced at home. “Students could be encouraged to reflect on their own work—using learning journals or discussion to express their struggles, approaches, and lessons learned after each assignment,” she writes.

I agree completely. Three years after ChatGPT, students have certainly learned and adapted to the tech. Why haven’t teachers?